Three Functional Roles of the Per-Layer Embedding Gate in Gemma-4 E2B

Gemma-4 E2B's Per-Layer Embedding gate is described in most discussions as a parameter efficiency mechanism, specifically a per-layer lookup table that gives a 2B model some of the representational richness of a larger one. I decided to run a full causal ablation battery across all 35 layers to test this understanding of the mechanism and what I found suggests the general concensus framing may beincomplete about the unit of analysis. The gate contains at least three co-located mechanisms with different magnitudes, alignment properties, domain dependencies, and causal effects, and treating it as one thing produces wrong predictions about which parts are load bearing.

To briefly introduce the 3 layer mental model - Layer 6 correlates with word sense discrimination but its causal contribution turns out to be syntactic and lexical rather than semantic. Removing the specific sense discriminative directions accounts for only 1.9% of L6's ablation damage. Layers 13 and 14 produce the largest effect in the residual stream, and by replacing their gate output with its population mean improves next token NLL by −0.1305 nats (P=1.000 on 501k tokens, BOS-corrected evaluation) on English but hurts on Chinese, making this a domain conditioned result rather than a simple pruning target. Layer 33 is a late-stage output prior whose ablation alone raises NLL by +1.37 and concentrates the damage steeply on rare tokens. The model can survive without its biggest injection, but it produces giberish if you remove this one.

Background: What PLE Actually Is

Before getting into the experiments, it's worth clarifying what the PLE gate actually does architecturally. PLE is a three stage object. Stage 1 is a tokenId-wise lookup, essentially an extra embedding table at each layer. Stage 2 is a tokenId-wise projection which is also a pure function of token identity with no context sensitivity. Stage 3 is the gated injection: gate(h_pre) * p_l, where the gate is a function of the pre layer residual stream and the projection is the token-id-only component from Stages 1 and 2.

The gate is the only context sensitive component. Everything I measured below is the Stage 3 output, which is what actually gets added to the residual stream. This distinction matters because it means the gate can modulate the injection based on what the model has computed so far, not just based on which token it's looking at.

Phase 1: Does the Gate Carry Word-Sense Information?

Note: Readers primarily interested in the causal results can skip to Phase 2.

This section is presented as a methodological arc rather than a core finding. It documents a hypothesis that turned out to be correlational, not causal due to an analytical error. I wanted to include it because I believe it's important to not just show the results, but how this worked developed including the mistakes made throughout. If anything this section can act as a warning about how not to intrepret representation geometry.

I started with a simple question. Does the PLE gate carry word-sense information? English is full of polysemous words. The word bank can mean a riverbank or a financial institution, bat can mean the animal or a piece of sports equipment... you get the idea. If the gate is just a static bias, it shouldn't care about context. The gate vector for the word bank should look the same regardless of whether the sentence is about rivers or money.

I built a POS-matched polysemy test using pairs of sentences where the same word appears with the same part of speech but in different senses. For each occurrence, I extracted the gate vector and measured whether same-sense occurrences cluster together.

The metric is simple. Compute the mean cosine similarity within a sense, divide by the mean cosine similarity across senses. A ratio of 1.0 means the gate can't tell senses apart. Anything above 1.0 means it can.

Layer 6 lit up.

| Layer | Gate Ratio | Residual Stream Ratio | Delta (Gate minus Residual) |

|---|---|---|---|

| L2 | 1.27 | 1.02 | +0.25 |

| L5 | 1.40 | 1.05 | +0.36 |

| L6 | 1.61 | 1.18 | +0.43 |

| L7 | 1.46 | 1.32 | +0.15 |

| L17 | 1.40 | 1.12 | +0.28 |

POS-matched polysemy discrimination. Bootstrap 95% CI for L6 delta: [+0.22, +0.76], P(>0)=1.000. n=15 words, 10,000 bootstrap resamples.

The gate at Layer 6 turns out to carry information beyond what the residual stream already knows. The delta of +0.43 means the gate is adding genuine disambiguation signal, not just echoing what the main computation has already figured out.

I replicated the analysis on the WiC benchmark from SuperGLUE, 512 independent word pairs with controlled same/different sense labels. L6 reproduced as the primary peak and L17 as a secondary peak. From all this, one anomaly surfaced - at L8 the residual stream was more same sense coherent than the gate, an inversion that matches something I had already seen in the polysemy data. Whatever L8's gate was doing, it wasn't agreeing with sense identity. The headline for Phase 1 is that the polysemy signal is real and reproduces on an independent dataset.

At this point, I thought I had my result. The PLE gate is a word sense disambiguation mechanism, strongest at Layer 6, and it adds real information. It felt like a clean story.

It turned out to be a correlation, not a mechanism. Later experiments (described below in the Phase 3 robustness battery) extracted the top-k sense discriminative directions from L6's gate activations via SVD of between sense difference vectors, then tested what happened when those specific directions were removed from the gate output. Removing the top-5 sense directions caused only +0.005 ΔNLL, which is 1.9% of the damage from zeroing L6 entirely. The model barely noticed their removal. What L6 is doing causally is something else: syntactic scaffolding, lexical priors, or positional structure. The word-sense signal at L6 is a correlational by product, not a causal mechanism. Phase 1's measurement of what the gate encodes is valid. The inference about what it does was not.

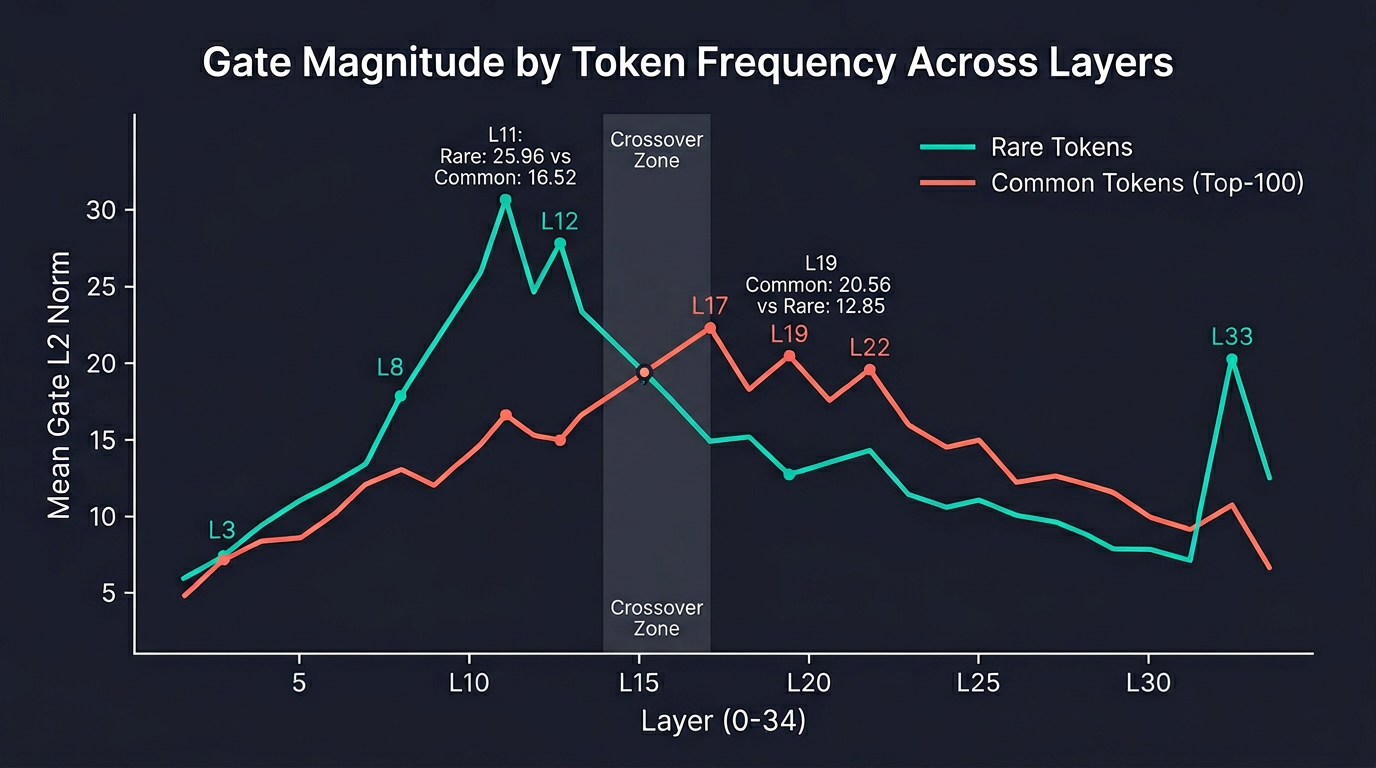

Then I looked at the magnitudes.

Phase 2: How Much Does the Gate Actually Change the Residual Stream?

Phase 2 shifted from asking what kind of information the gate carries to asking how large the injection is and which direction it points.

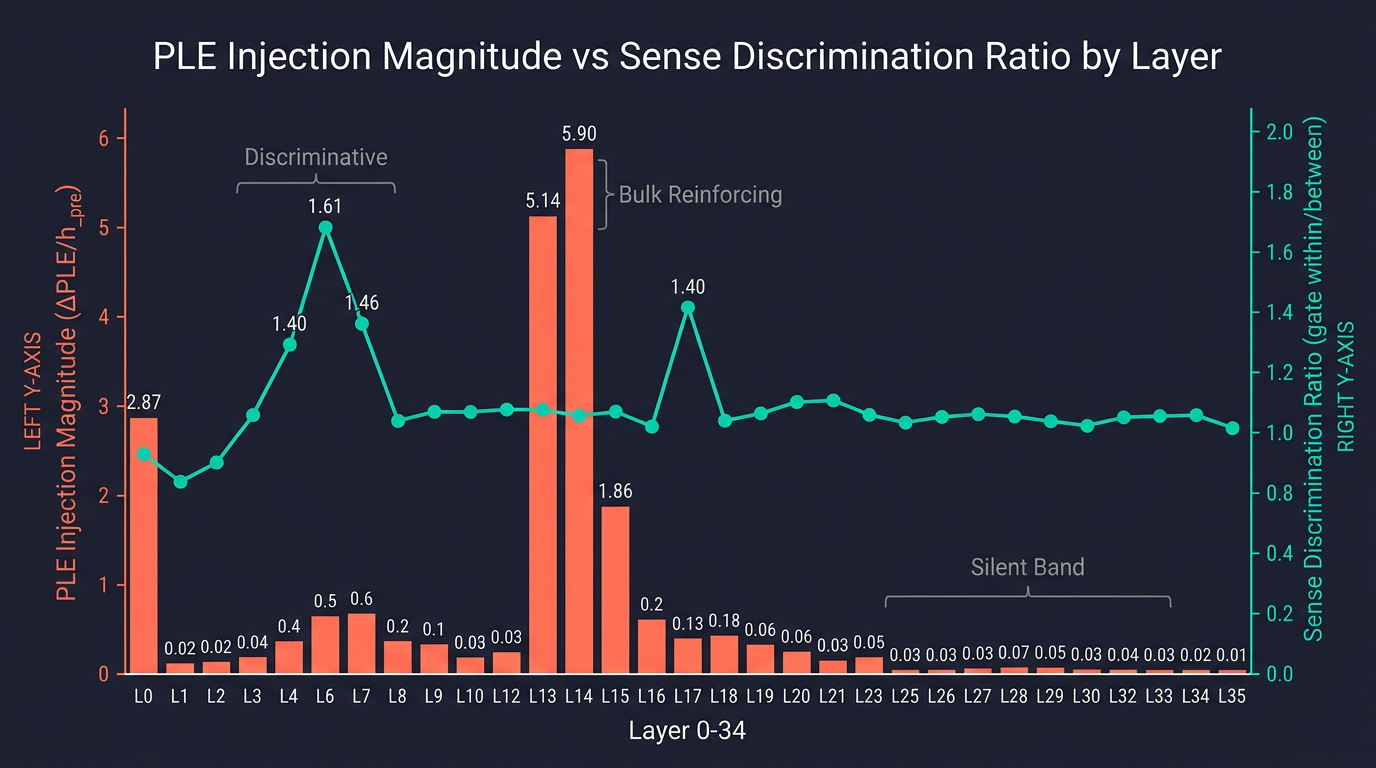

I measured ‖ΔPLE‖ / ‖h_pre‖ for 100,000 C4 tokens across all 35 layers. This is the L2 norm of the PLE contribution divided by the L2 norm of the residual stream before injection.

The result was not what I expected.

| Layer | Residual Norm | PLE Injection Norm | Ratio | Regime |

|---|---|---|---|---|

| L0 | 39.3 | 112.7 | 2.87 | One-shot bootstrap |

| L1 | 31.6 | 2.9 | 0.09 | Quiet |

| L6 | 60.6 | 12.1 | 0.20 | Discriminative |

| L13 | 49.4 | 255.5 | 5.14 | Bulk reinforcing |

| L14 | 45.0 | 262.8 | 5.90 | Bulk reinforcing |

| L15 | 63.6 | 117.9 | 1.86 | Bulk reinforcing tail |

| L17 | 58.1 | 17.9 | 0.31 | Discriminative |

| L25 | 64.9 | 4.0 | 0.06 | Silent band |

| L30 | 89.0 | 2.3 | 0.03 | Silent band (min) |

| L33 | 69.9 | 31.2 | 0.45 | Output-prior reinject |

PLE injection magnitude relative to the residual stream. 100k C4 tokens, bf16.

At Layers 13 and 14, the PLE injection is 5 to 6 times larger than the residual stream itself. This bias isn't subtle, it is the single largest representational shift in the model's forward pass.

Layer 0's ratio of 2.87 is the third largest event in the table, but it's a different case. L0 is the initial PLE conditioning of the residual stream, a one-shot bootstrap that sets up the token identity signal the rest of the model builds on. It is not the same kind of injection as L13/L14, and I haven't ablated it yet. The rest of this post is about that midnetwork injection.

This is gate magnitude, not the contribution ratio ‖ΔPLE‖/‖h_pre‖ in the table above. Those two measures can disagree at a layer because the up projection remaps the gate before the residual add. The 5–6× ratio spike at L13–L14 therefore appears in the table, not as a mid-network spike in this figure.

Meanwhile, Layers 25 through 32 form a silent band. The gate still fires with large activation norms, but the final PLE contribution is less than 7% of the residual. The gate machinery is running, but it's being used inhibitorily. Most of the gate work in late layers suppresses rather than injects.

The discriminative layer at L6 has a magnitude ratio of just 0.20, doing precision work with a tiny injection. L13/L14 are 25 to 30x larger.

Direction Alignment

I also checked what direction the PLE injection points relative to the layer's overall residual update. The question at hand was "Does it reinforce what the layer is already doing, or oppose it?"

| Layer | cos(ΔPLE, Δh) | Reading |

|---|---|---|

| L4 | +0.44 | Reinforcing |

| L13 | +0.26 | Reinforcing (high magnitude) |

| L14 | +0.34 | Reinforcing (high magnitude) |

| L15 | +0.54 | Strongly reinforcing |

| L17 | -0.07 | Opposing |

| L18 | -0.07 | Opposing |

The discriminative layer L17, the secondary sense disambiguation peak, is the exact point where the PLE delta turns opposing. The gate there is subtracting something the residual stream wanted to add.

L13/L14's injection is broadly aligned with the residual delta. It's reinforcing. But reinforcing what exactly...??

Linear Predictability from Token Identity

I checked how much of each layer's PLE contribution is predictable from token identity alone using a ridge regression with λ=1.0, regressing from the tokenId embedding features.

| Layer | Stage-1 R² | Combined R² | Reading |

|---|---|---|---|

| L0 | 0.37 | 0.42 | Moderate token-id dependence |

| L13 | 0.18 | 0.21 | Partially token-driven |

| L14 | 0.25 | 0.26 | Partially token-driven |

| L17 | 0.07 | 0.07 | Almost entirely context-driven |

R² is low everywhere, ranging from 0.07 to 0.42, but the pattern is pretty clear. The L13/L14 layers are among the most tokenId predictable of the layers. L17 is the opposite, with a low R² and a gate direction that opposes the residual delta. Whatever L17 is doing, it's almost entirely context driven.

This is the structural separation I was looking for! The discriminative band is small magnitude, context driven, and mixed/opposing in direction. The L13/L14 band is high magnitude, partially token driven, and aligned. They are different mechanisms sharing the same architectural slot.

Causal Ablation of the L13/L14 Spike

Heres the idea - If the L13/L14 spike is the largest single arithmetic event in the residual stream, it must be important...Right?

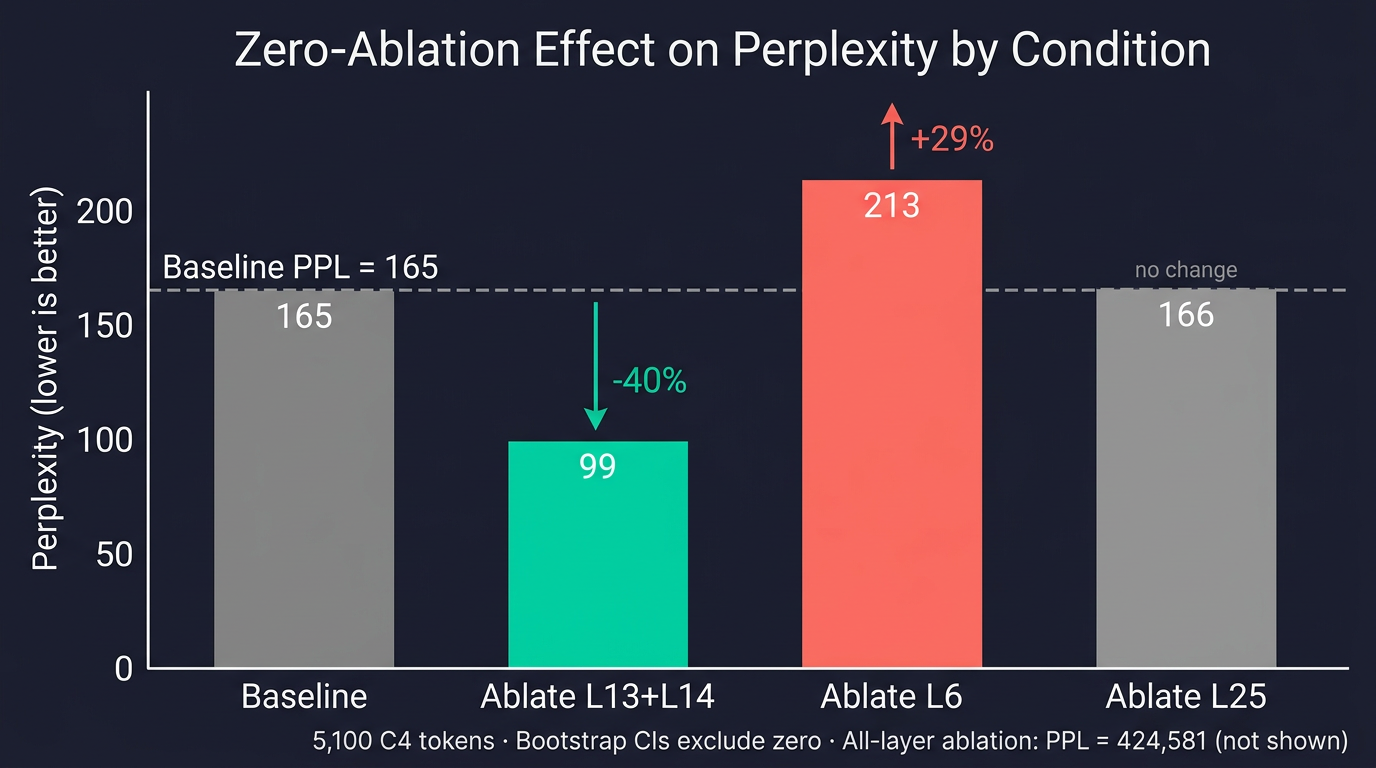

I ran a zero ablation experiment, forcing the gate output to zero at specific layers by hooking the per layer act_fn modules and measuring the change in next-token prediction loss on 5,100 C4 tokens. Five conditions, same input, controlled comparison.

| Condition | Layers Ablated | Mean NLL | Perplexity | Change |

|---|---|---|---|---|

| Baseline | 0 | 5.108 | 165 | |

| Ablate L13+L14 | 2 | 4.596 | 99 | -40% PPL |

| Ablate L6 | 1 | 5.362 | 213 | +29% PPL |

| Ablate L25 | 1 | 5.114 | 166 | +0.6% PPL |

| Ablate All 35 | 35 | 12.959 | 424,581 | Representation collapse |

Note on absolute perplexity figures: the numbers in this initial table are from the original evaluation setup, which omitted the BOS token (add_special_tokens=False). These are inflated by approximately 3× relative to a BOS-corrected evaluation on the same corpus (baseline PPL 165 → 150 with BOS), and should not be compared against published benchmarks. The in-distribution ablation results in the robustness section below were fully rerun with BOS correctly prepended, and those are the numbers this analysis rests on.

Removing the L13/L14 injection makes the model better, and this holds even with in-distribution replacements on a BOS corrected evaluation.

The zero ablation result is a 40% relative PPL drop, but zero-ablation is an out-of-distribution intervention and that number is an upper bound on the true effect. The definitive figure comes from a BOS-corrected replication (see robustness section below): mean-ablation ΔNLL = −0.1305 nats on 501k tokens with BOS prepended to each document (P=1.000, 95% CI [−0.1348, −0.1265]). The corpus mean used in that run was also computed on the same BOS-corrected pipeline, so it is in-distribution for the corrected forward pass with no BOS/mean mismatch. The zero-ablation figures below are kept for context as an upper bound.

The controls in the zero ablation run behave correctly, which validates the experimental setup. L25 ablation is a no op, moving NLL by just +0.006. The silent band really is quite silent. L6 ablation hurts, raising NLL by +0.254. Full ablation causes representation collapse, raising perplexity from 165 to 425,000. PLE as a whole is deeply load-bearing.

The improvement in the zero-ablation run isn't concentrated on rare or common tokens. It's across the board.

| Frequency Bin | ΔNLL (L13+L14 Ablated) | 95% Bootstrap CI |

|---|---|---|

| Hapax (count=1) | -0.799 | [-0.920, -0.672] |

| Uncommon (2-3) | -0.386 | [-0.530, -0.234] |

| Common (4-9) | -0.222 | [-0.393, -0.046] |

| Very Common (≥10) | -0.534 | [-0.598, -0.470] |

Bootstrap CIs (n=2000, resampling tokens). All bins exclude zero. Negative ΔNLL means the model improves.

A super interesting suggestion by these data is that the original parameter efficiency hypothesis appears to be reversed in the sense that the model appears to not make efficient use of some layers, or at least they actively harm the outputs. Specifically, the L13/L14 spike hurts prediction for both the rarest and most common tokens on this distribution.

Phase 3: Robustness Battery

Does this survive sample size, precision, distribution, and in-distribution ablation?

The initial ablation ran on 5,100 C4 tokens in bf16 which was just to establish direction but isn't nearly robust enough on its own as it introduces several concerns. Firstly, the sample is far too small, bf16 might introduce numerical artifacts related to precision alone, the effect could be C4-specific, and zero-ablation (replacing gate activations with zeros) is out-of-distribution. IOOW - What if the improvement is just the model responding to an OOD input rather than reflecting something harmful in L13/L14's specific direction?

I ran a full robustness battery addressing each of these in turn.

Sample size and precision. I reran the ablation at 50k tokens, in fp32 on CPU, and on math and code corpora.

| Run | Tokens | Baseline NLL | Ablate L13/L14 | ΔNLL | Ablate L6 ΔNLL | Ablate L25 ΔNLL |

|---|---|---|---|---|---|---|

| C4 5k bf16 (original) | 5,100 | 5.108 | 4.596 | -0.512 | +0.254 | +0.006 |

| C4 50k bf16 | 51,000 | 5.531 | 4.917 | -0.614 | +0.243 | +0.012 |

| C4 5k fp32 CPU | 5,100 | 5.127 | 4.606 | -0.521 | +0.219 | +0.007 |

| Code 25k bf16 | 25,500 | 3.388 | 3.269 | -0.119 | +0.160 | +0.008 |

| Math 25k bf16 | 25,500 | 4.414 | 4.100 | -0.314 | +0.141 | +0.004 |

The controls behave correctly across every run. L25 is always a no-op. L6 always hurts. L13/L14 always helps overall. Scaling from 5,100 to 51,000 tokens strengthens the effect from -0.512 to -0.614 and collapses every bin's bootstrap CI to P=0.000. Running fp32 on CPU with the same 5,100 tokens gives Δ = -0.521, within 0.9% of the bf16 number, so the precision story is dead. Math text behaves like English at Δ = -0.314 with every frequency bin helped. Code helps overall at Δ = -0.119 but contains a partial exception worth its own discussion.

In-distribution ablation, BOS-corrected. The more fundamental concern is whether zeroing the gate output is too disruptive to be informative, and whether the corpus mean is truly in-distribution when the baseline was missing the BOS token. I addressed both by rerunning the full ablation control experiment with BOS prepended to every document (add_special_tokens fixed), computing the corpus mean on the first 250k tokens of the same BOS-corrected stream, and evaluating on the held-out remaining 250k. The mean is therefore in-distribution for the corrected forward pass.

| Ablation type | ΔNLL (BOS-corrected) | 95% CI | P(<0) |

|---|---|---|---|

| Zero | -0.451 | [-0.459, -0.444] | 1.000 |

| Mean (corpus mean, cross-validated) | -0.1305 | [-0.1348, -0.1265] | 1.000 |

| Resample (shuffled real activation) | -0.1462 | [-0.1509, -0.1415] | 1.000 |

All three ablation types improve NLL with P=1.000. The BOS correction reduces the effect size modestly (zero: −0.591 → −0.451; mean: −0.159 → −0.1305) but does not change the sign or significance. The attention sink argument (that missing BOS corrupts the activation geometry and makes the effect unreliable) is directly answered here too... In a properly formatted forward pass, with an in-distribution corpus mean computed on the same setup, mean ablation still shows −0.1305 nats improvement.

The Code Distribution Sign Flip

| Run | Hapax (1) | Uncommon (2-3) | Common (4-9) | Very Common (≥10) |

|---|---|---|---|---|

| C4 5k bf16 | -0.799 | -0.386 | -0.222 | -0.534 |

| C4 50k bf16 | -0.738 | -0.519 | -0.394 | -0.654 |

| C4 5k fp32 CPU | -0.801 | -0.391 | -0.224 | -0.549 |

| Code 25k bf16 | -0.475 | -0.153 | +0.322 | -0.139 |

| Math 25k bf16 | -0.601 | -0.177 | -0.117 | -0.335 |

On C4 and math, every frequency bin shows the ablation helping. On code, the common bin with frequency 4-9 flips sign at +0.322 with P=1.000. Ablating L13/L14 hurts medium frequency code tokens even though it helps every other bin and helps overall.

This is the first concrete evidence that the L13/L14 injection is doing something distribution specific rather than being a uniformly removable scaffold. One plausible reading is that the high magnitude tokenId signal at L13/L14 shapes a prior that helps when the next token falls within the model's narrow medium frequency code keyword predictive distribution, things like language keywords, common identifiers, and punctuation chunks, but pulls in the wrong direction for the unbounded vocabulary of natural language.

The multilingual picture confirms the distribution-specificity. I ran the same zero-ablation on 50k tokens of German C4 and 50k tokens of Chinese Wikipedia:

| Language | ΔNLL (ablate L13+L14) | SE | P |

|---|---|---|---|

| English (500k) | -0.614 | 0.003 | P(<0)=1.000 |

| German (50k) | -0.255 | 0.011 | P(<0)=1.000 |

| Chinese (50k) | +0.042 | 0.014 | P(>0)=0.999 |

On Chinese Wikipedia, the sign flips. Ablating L13/L14 now hurts the model. Whatever L13/L14 inject is net-harmful on English and German but net-helpful on Chinese. The implication is that L13/L14 cannot be uniformly pruned at inference time. A language-conditional mask would be required.

Benchmark impact. NLL improvement could still be a "free performance" illusion if the model generates worse or more repetitive text. I evaluated 500 C4 prompts with 128-token greedy continuations and 1,000 examples each from MMLU and HellaSwag:

| Condition | MMLU | HellaSwag | Rep. rate |

|---|---|---|---|

| Baseline | 52.6% | 56.6% | 0.425 |

| Ablate L13+L14 | 50.9% | 54.2% | 0.437 |

| Ablate L33 | 46.7% | 57.7% | 0.376 |

The L13/L14 ablation causes a -1.7pp drop on MMLU and -2.4pp on HellaSwag, but both differences fall within the ±1.6pp bootstrap SE and the confidence intervals substantially overlap. The NLL improvement does not come at a measurable accuracy cost at this sample size. The repetition rate increases slightly (+1.2pp), which is real but small.

L33: The Output Prior Reinjection

After establishing that the L13/L14 injection is net-harmful on English, I tested the other structurally unusual layer, L33. This is the single PLE layer that breaks the long line of silent layers. From L25 through L32, the gate fires with large activation norms but the PLE contribution to the residual stream is less than 7% of the stream norm, indicating the model is suppressing the PLE pathway. Then at L33, the ratio jumps back to 0.45.

I ran a zero-ablation of L33 on 25,500 C4 tokens alongside a replication of the L13/L14 ablation as a control.

| Condition | Mean NLL | Perplexity | ΔNLL |

|---|---|---|---|

| Baseline | 5.483 | 241 | |

| Ablate L13+L14 | 4.895 | 134 | -0.588 |

| Ablate L33 | 7.076 | 1,183 | +1.593 |

Ablating a single layer at L33 raises NLL by +1.593, a five-fold increase in perplexity despite its moderate injection magnitude of 0.45× the residual stream.

The damage is not spread uniformly. It concentrates steeply on rare tokens:

| Frequency Bin | Baseline NLL | Ablated NLL | ΔNLL |

|---|---|---|---|

| Hapax (count=1) | 9.59 | 12.94 | +3.35 |

| Uncommon (2-3) | 7.86 | 10.89 | +3.03 |

| Common (4-9) | 6.67 | 9.32 | +2.65 |

| Very Common (≥10) | 3.80 | 4.45 | +0.65 |

Hapax tokens lose 3.35 nats and very common tokens lose only 0.65 nats, a 5.2× difference. This is the signature of a model whose output distribution collapses toward common tokens when the late stage PLE signal disappears. L33 is the model's last injection point before the output head, and it maintains probability mass on rare tokens. Without it, the model doesn't know how to assign meaningful probability to anything it doesn't see constantly.

That zero ablation result is severe, but it overstates how much of L33's specific content is essential. The BOS-corrected robustness run (Phase 3 below) included a mean ablation of L33, replacing the gate output with its corpus mean. The result was +0.009 ΔNLL, barely above zero, and the resample ablation gave +0.012. When any plausible activation is substituted, the model adapts without measurable damage. What L33 cannot survive is the complete absence of an activation, not the removal of its specific direction. This separates L33 from L6, where resample ablation causes 69% of zero's damage, confirming L6's gate content is genuinely load-bearing. It also separates L33 from L13/L14, where mean ablation still helps (−0.1305), confirming the specific direction those layers inject is harmful. L33 is better understood as a norm balancing reinjection. The downstream computation requires something at that position to maintain correct residual stream scale, but the information content is largely interchangeable with the population mean.

This result inverts the naive reading of PLE importance. The causal hierarchy, sorted by how much ablating a single condition hurts the model, is:

| Rank | Layer | ΔNLL | Magnitude Ratio | Role |

|---|---|---|---|---|

| 1 (most harmful) | L33 | +1.59 | 0.45 | Output-prior reinject |

| 2 | L6 | +0.25 | 0.21 | Discriminative |

| 3 | L25 | +0.01 | 0.06 | Silent band (no-op) |

| 4 (helps to remove) | L13+L14 | -0.59 | 5.1/5.9 | Bulk reinforcing |

The ordering is exactly the reverse of what magnitude would predict. The loudest layers are the least useful. The quietest load-bearing layer is the most essential. Magnitude does not predict causal importance, consistent with prior mechanistic interpretability findings on outlier dimensions and high norm but task irrelevant attention heads, and replicated here at the level of an entire PLE injection.

Three Functional Roles

The experiments above reveal three distinct functional roles co-existing in the same architectural component.

| Role | Layers | Magnitude | Direction | Sense Discrimination | Token-id R² | Ablation Effect |

|---|---|---|---|---|---|---|

| Syntactic/Lexical | L4-L7, L17 | Small (0.1-0.6x) | Mixed, opposing at L17 | Correlates (L6: 1.61) but not causal (1.9% of damage) | Low (0.07-0.13) | Hurts model (+0.25 NLL) |

| Bulk Reinforcing | L13-L15 | High (1.9-5.9x) | Aligned | Near-zero (1.01) | Elevated (0.18-0.25) | Helps model on EN/DE (-0.59 NLL); hurts on ZH (+0.04) |

| Output Prior | L33 | Moderate (0.45x) | Aligned | Near-zero (1.02) | Low (0.11) | Severe (+1.59 NLL zero ablation; +0.009 mean ablation) |

These roles are independant and have different magnitudes, alignment properties and, causal effects. Treating the PLE gate as a single mechanism, which is how it tends to get described, is mechanistically wrong.

The strongest finding is at Layers 13 and 14 where the model injects a token identity signal that is 5 to 6x the size of the residual stream, broadly aligned with the layer's update, yet actively harmful for next-token prediction when removed, except on Chinese where the sign flips and on medium frequency code tokens where one bin also reverses.

There are three readings of what L13/L14 are doing, ordered from conservative to speculative:

-

Distributional prior. L13/L14 shape a nexttoken prior that helps when the output distribution is narrow and keyword dominated (code, possibly Chinese) but hurts when it's broad (English natural language, math). The mechanism is real and useful in certain regimes, but not useful enough to overcome its net cost on average English text.

-

Training scaffold. During end-to-end training, the optimizer found it useful to create a high magnitude, mid network token identity signal that downstream layers could learn to route around, compensate for, or selectively extract from. The injection exists because of optimization dynamics rather than because it helps at inference time. This inject-then-attenuate structure has been observed elsewhere in mechanistic interpretability work. It would explain why ablating L13/L14 improves NLL, the downstream network has already learned to discount the injection, and removing it simplifies their input.

-

Optimization artifact. The most conservative reading: L13/L14 exist because of a training dynamics event (a learning-rate transition, an early loss plateau) and have no interpretable function. The tokenId predictability and alignment are side effects of the training trajectory.

Distinguishing these three requires training checkpoint data, specifically the trajectory of PLE gate weights through the pretraining run. That data is not publicly available for Gemma-4, so the reading remains open. What the ablation data alone can say is that L13/L14's content is net harmful on English and German, net helpful on Chinese, and the result is robust to sample size, precision, and ablation type.

The practical implications are qualified by the Chinese sign flip:

- Conditional inference efficiency. On English and German text, removing L13/L14 improves NLL with no measurable benchmark cost. On Chinese, it doesn't. A language conditional gate could in principle realize the efficiency gain on Latin-script text while preserving the injection for Chinese.

- Distillation targets. When distilling or pruning Gemma-4 for English-primary deployments, L13/L14 are candidates for removal. For multilingual deployments, they are not.

- Architecture design. Future PLE-style designs might benefit from regularization that prevents the optimizer from creating high magnitude injections that are partially language conditioned and net harmful on the dominant training language.

One Component, Three Mechanisms

The PLE gate is not one mechanism. It is at least three architecturally colocated mechanisms with different magnitudes, alignments, and causal directions.

The syntactic/lexical role at L6 is small, high information density, and causally load-bearing. The gate at L6 correlates with word sense discrimination, meaning gate vectors cluster by sense more than the residual stream does, but that signal is a byproduct rather than the mechanism. What L6 is doing causally, based on subspace ablation, is syntactic scaffolding or lexical priming. Removing it hurts the model. The bulk reinforcing role at L13/L14 is high magnitude, low-information-density, and net-harmful on English and German. Removing it helps the model on those distributions, and that result survives 500k token scaling, fp32 precision, mean ablation, and resample ablation. On Chinese, the sign reverses, ablating L13/L14 hurts, which means the injection is language conditioned and cannot be universally removed. The output prior reinjection at L33 is the only late-layer PLE event that survives the silent band, and it is the most causally essential PLE layer in the model. Ablating L33 alone raises NLL by +1.59, six times worse than ablating L6 and in the opposite direction from ablating L13/L14. The damage concentrates 5.2× more on rare tokens than common tokens, consistent with L33 serving as the model's final opportunity to inject token identity information before the output head. The model's output distribution collapses toward common tokens without it.

The Chinese sign flip is the most important qualification. It means the L13/L14 injection is not a uniformly removable scaffold. It is doing something that helps on Chinese even while hurting on English and German, which is exactly the kind of distribution dependent behavior you would expect from a high-magnitude mechanism shaped by the optimizer across a mixed multilingual pretraining corpus.

The Phase 3 mean ablation results confirm that the harm to English NLL comes from the specific direction of L13/L14's injection rather than from disrupting a large activation. Replacing the gate output with its population mean on a BOS-corrected evaluation still improves NLL by −0.1305 nats (P=1.000 on 501k tokens), and the resample ablation agrees at −0.1462. The key remaining open question for L13/L14 is whether the content that helps on Chinese is the same content that hurts on English, specifically whether a subspace of the injection could be selectively preserved for multilingual use while removing the harmful component. For L33, the mean-ablation result (+0.009 ΔNLL) established that the model relies on activation presence and scale rather than specific information content. The remaining question is what determines that norm-balancing threshold and whether the rare-token sensitivity is type-specific or structural.

The broader takeaway is that a single architectural component typically described as a monolithic parameter efficiency device contains at least three mechanisms with different magnitudes, directions, domain dependencies, and causal effects. L13/L14 specifically is a high-magnitude injection that is net-harmful on the dominant training language yet net-helpful on Chinese and narrow keyword distributions. Whether that makes it a training scaffold, a language-conditioned prior, or an optimization artifact is a question the inference-time data cannot answer alone. What it does tell you is that treating PLE as a single thing to keep or remove is the wrong unit of analysis, and detecting these within-component asymmetries requires causal intervention, not just representational measurement.